Some common causes of a 500 error include permission error, 3rd party plug-in issue, misconfiguration of. 500 internal server error: This code refers to a situation in which there is a problem with the website’s server.403 forbidden: This happens when a web server forbids the SEO bots request to view the page.301 moved permanently: This code indicates that the URL has permanently been moved to a different URL.If the page is important or brings in traffic, this should be addressed immediately. 404 file not found: These are often referred to as broken links or dead links, meaning the server couldn’t find the requested source.

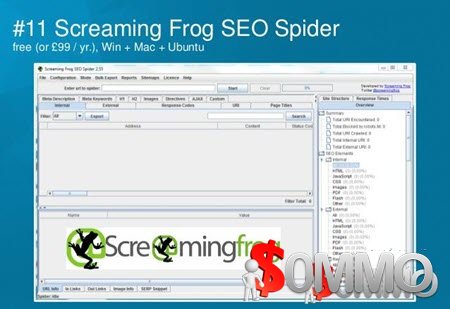

The most common status codes that SEO specialists look out for include: Crawlers should provide a list of the status codes within your website structure, so you can then ensure that these codes are correct and rectify any errors when necessary. HTTP status codes impact the indexing of websites, shedding light on whether URLs or content have been moved or if the server is facing any issues. HTTP response status codesĪnalyzing a website’s HTTP status code is an important part of a search engine optimization technical audit. Non-indexable pages are pages that are not indexed by search engines.Ī spider should provide you with the indexability status of your URLs so as to ensure that you are not missing out on any ranking opportunities for pages that are not indexed (but you thought they were). Indexable pages are pages that can be found, analyzed, and indexed by search engines. Your tool must be able to identify canonicalized pages, pages without a canonical tag and unlinked canonical pages so that you can catch any misplacements early on. These tags will help in preventing duplicate content. SEO crawlers should be able to analyze websites and provide a solution for the following core issues: Canonical tagsĬanonical tags are a powerful way to let search engines know which pages you want them to index. The best technical SEO crawler would depend on the features you need and the size of the enterprise or clients you work with. It’s worth mentioning that there is no one crawler that rules them all. Key considerations when choosing an SEO crawling toolīelow is a list of some key features we look for when comparing and assessing the different solutions available on the market. An SEO-optimized website increases your chances of ranking organically on search engines. This data helps SEO professionals and web developers build and maintain websites that search engines can easily crawl. 
The crawler works as a bot that visits every page on your website following commands provided by robots.txt file and then extracts data to bring data back to you. An SEO spider is a technical auditing tool used by SEO professionals to understand the website, collect information and find critical issues.
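To make the status code review described above more concrete, here is a minimal sketch of how a crawler's output might be triaged. It assumes the crawl results are already available as a mapping from URL to status code. The names `ACTIONS`, `triage`, and `describe`, the suggested actions, and the sample URLs are all hypothetical and do not come from any particular SEO tool.

```python
from http import HTTPStatus

# Hypothetical follow-up actions for the four status codes discussed above.
ACTIONS = {
    301: "confirm the redirect points to the intended URL",
    403: "check whether the server is blocking crawler requests",
    404: "fix or redirect the broken link",
    500: "investigate the server-side error",
}


def triage(crawl_results):
    """Group crawled URLs by status code, keeping only codes that need review."""
    report = {}
    for url, code in crawl_results.items():
        if code in ACTIONS:
            report.setdefault(code, []).append(url)
    return report


def describe(code):
    """Return a label such as '404 Not Found' followed by the suggested action."""
    return f"{code} {HTTPStatus(code).phrase}: {ACTIONS[code]}"


if __name__ == "__main__":
    # Made-up crawl output, for illustration only.
    sample = {
        "https://example.com/": 200,
        "https://example.com/old-page": 301,
        "https://example.com/missing": 404,
        "https://example.com/admin": 403,
    }
    for code, urls in sorted(triage(sample).items()):
        print(describe(code))
        for url in urls:
            print("  ", url)
```

Pages that return 200 are left out of the report on purpose, so only the URLs that may need attention are listed. A real audit would likely cover more codes than these four.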
